Introduction: Why a Robots.txt File Is Important

In today’s digital world, search engines constantly crawl websites to understand and rank them. However, not every page on your website needs to be crawled. Some pages may be private, duplicate, or not useful in search results. This is where a robots.txt file, such as one created with the Generate Robots.txt Files SpellMistake tool, becomes very important.

A robots.txt file helps you control how search engines interact with your website. It tells search engine bots which pages they can access and which they should ignore. Without it, search engines may crawl unnecessary pages, which can affect your SEO performance.

The Generate Robots.txt Files SpellMistake tool makes this process simple and fast. You do not need coding skills or technical knowledge. In this guide, you will learn everything about robots.txt files and how to create them easily in 2026.

What Is a Robots.txt File?

A robots.txt file is a simple text file placed in your website’s root directory. It provides instructions to search engine bots about which pages they can crawl.

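For example, you can block certain pages like admin panels, login pages, or duplicate content. This helps search engines focus only on important pages. Keep in mind that robots.txt is a set of instructions for well-behaved bots, not a password: it does not stop a person or a misbehaving bot from opening a page.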

Robots.txt works like a rulebook. It guides search engines on how to interact with your website.

Using the Generate Robots.txt Files SpellMistake tool, you can create this file easily without writing code manually.

How Robots.txt Helps in SEO

Robots.txt plays a key role in improving your SEO performance. It helps search engines crawl your website more efficiently.

By blocking unnecessary pages, you can save crawl budget. This means search engines will focus on your important pages instead of wasting time on irrelevant ones.

It can also reduce duplicate content issues. When crawlers skip duplicate pages, they spend more of their time on your main content.

With the Generate Robots.txt Files SpellMistake tool, you can optimize your robots.txt file and support your website rankings.

Key Features of Generate Robots.txt Files SpellMistake

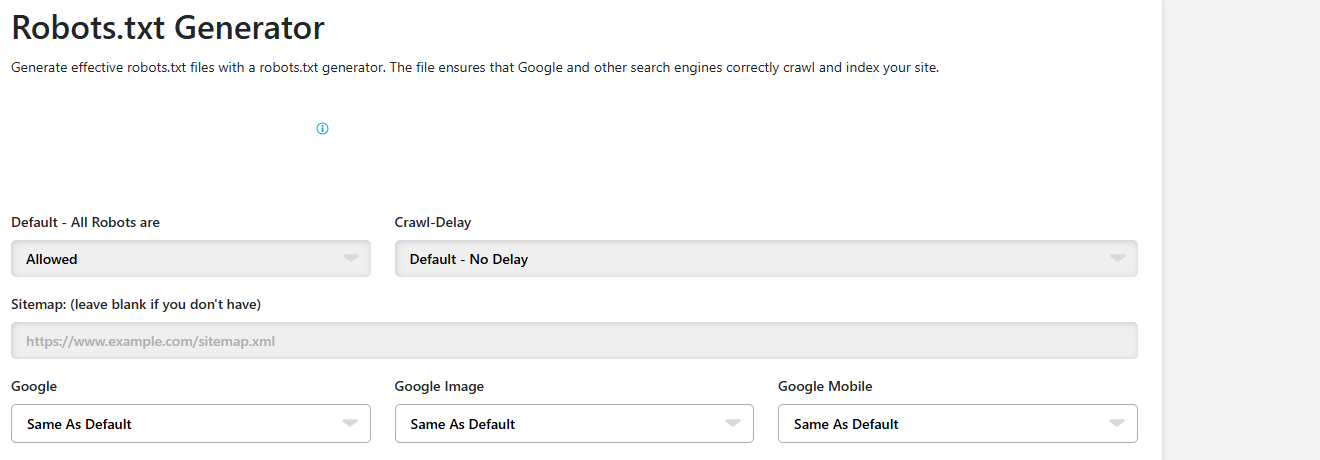

The Generate Robots.txt Files SpellMistake tool offers several useful features. It is fast and generates your robots.txt file instantly.

The tool is very easy to use. You do not need technical skills to create your file.

It allows you to customize rules according to your needs. You can allow or block specific pages easily.

Another important feature is accuracy. The tool ensures that your file is correctly formatted and ready to use.

How the Tool Works in Simple Steps

Using the Generate Robots.txt Files SpellMistake tool is very simple. First, open the tool in your browser.

Then, enter your website details and choose the pages you want to allow or block. The tool will guide you through the process.

After that, click on the generate button. The tool will create your robots.txt file instantly.

Finally, download the file and upload it to your website’s root directory. This ensures that search engines can access it.
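
Crawlers look for the file at one fixed location, `/robots.txt` at the root of the host, which is why the upload location matters. The following is a minimal sketch of how that location can be derived from any page address. The function name `robots_txt_url` and the `example.com` address are illustrative assumptions, not part of the tool.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the address where crawlers expect robots.txt for this page's host."""
    parts = urlsplit(page_url)
    # Keep the scheme and host, replace the path, and drop query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


# Hypothetical page on an example site.
location = robots_txt_url("https://example.com/blog/post?id=1")
print(location)
```

If the file is uploaded into a subfolder instead, bots that follow this convention will not find it.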

Step-by-Step Process to Create Robots.txt File

Follow these simple steps to create your robots.txt file:

- Open the Generate Robots.txt Files SpellMistake tool.

- Enter your website URL.

- Select which pages you want to allow or block.

- Click on the generate button.

- Download the robots.txt file.

- Upload the file to your website root folder.

- Test the file using search engine tools.

This process helps you create a proper robots.txt file quickly and easily.
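
To make the steps above more concrete, here is a rough Python sketch of what a generator of this kind might do internally. It is not the tool's actual code. The function `generate_robots_txt`, its parameters, and the sample paths are all assumptions made for illustration.

```python
def generate_robots_txt(disallow, allow=(), user_agent="*", sitemap_url=None):
    """Build robots.txt text from lists of allowed and blocked paths."""
    lines = [f"User-agent: {user_agent}"]
    lines += [f"Allow: {path}" for path in allow]
    lines += [f"Disallow: {path}" for path in disallow]
    if not allow and not disallow:
        # An empty Disallow value means nothing is blocked for this group.
        lines.append("Disallow:")
    if sitemap_url:
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


# Hypothetical choices a site owner might make in the form.
robots_txt = generate_robots_txt(
    disallow=["/admin/", "/login"],
    allow=["/blog/"],
    sitemap_url="https://example.com/sitemap.xml",
)
print(robots_txt)
```

The printed text is what you would save as `robots.txt` and upload to the root folder.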

Common Mistakes to Avoid in Robots.txt

Many website owners make mistakes while creating robots.txt files. One common mistake is blocking important pages. This can prevent search engines from indexing your content.

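Another mistake is incorrect formatting. Even a small error, such as a missing colon or a misspelled directive, can cause bots to ignore a rule.

Blocking CSS or JavaScript files can also cause problems, because search engines need these files to render your pages the way visitors see them.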

Using the Generate Robots.txt Files SpellMistake tool helps avoid these mistakes by providing correct structure and guidance.
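
The mistakes described above can also be caught with a simple check before you upload the file. The sketch below is a heuristic only, not a full validator. The function `find_risky_rules` and the sample file are assumptions made for illustration.

```python
def find_risky_rules(robots_txt: str) -> list[str]:
    """Return warnings for a few common robots.txt mistakes."""
    warnings = []
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # ignore comments
        if not line:
            continue
        if ":" not in line:
            warnings.append(f"line {number}: not a 'field: value' rule")
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        if field.lower() != "disallow":
            continue
        if value == "/":
            warnings.append(f"line {number}: blocks the entire site")
        elif value.lower().rstrip("$").endswith((".css", ".js")):
            warnings.append(f"line {number}: blocks stylesheets or scripts")
    return warnings


# Hypothetical file containing all three mistakes.
sample = "User-agent: *\nDisallow: /\nDisallow: /*.css$\nDisallow /tmp\n"
problems = find_risky_rules(sample)
for problem in problems:
    print(problem)
```

A check like this only flags patterns that are usually unintended. A rule such as `Disallow: /` can be correct on a staging site, so treat the output as a prompt to review, not as an error list.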

Best Practices for Robots.txt Optimization

To get the best results, follow some simple practices. Always allow important pages so they can be indexed.

Block only unnecessary or duplicate pages. Keep your file simple and clean.

Update your robots.txt file regularly when you make changes to your website.

Use the Generate Robots.txt Files SpellMistake tool to keep your file well structured and free of formatting errors.

Robots.txt Rules and Their Purpose

| Rule Type | Example | Purpose |
|---|---|---|
| User-agent | User-agent: * | Applies the rules that follow to all bots |
| Allow | Allow: /blog | Allows access to blog pages |
| Disallow | Disallow: /admin | Blocks admin pages |
| Sitemap | Sitemap: https://example.com/sitemap.xml | Helps bots find the sitemap (use the full URL) |
| Crawl-delay | Crawl-delay: 10 | Asks bots to wait between requests; not every search engine honors it |
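
To see how a program reads the rule types in the table, the sketch below parses a small file with Python's standard-library `urllib.robotparser`. The sample rules, the `example.com` addresses, and the bot name `ExampleBot` are assumptions for illustration. This parser uses simple prefix matching, so a real search engine may interpret edge cases differently. `site_maps()` requires Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file that uses each rule type once.
SAMPLE = """\
User-agent: *
Allow: /blog
Disallow: /admin
Crawl-delay: 10

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(SAMPLE.splitlines())

blog_ok = parser.can_fetch("ExampleBot", "https://example.com/blog/post-1")
admin_ok = parser.can_fetch("ExampleBot", "https://example.com/admin")
delay = parser.crawl_delay("ExampleBot")
sitemaps = parser.site_maps()

print(blog_ok, admin_ok, delay, sitemaps)
```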

How Robots.txt Improves Website Performance

Robots.txt helps improve website performance by controlling how search engines crawl your site.

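By keeping bots away from unnecessary pages, it can reduce the number of requests your server has to handle. This may help on busy sites or on hosting with limited resources.

It also helps search engines focus on important content, which can support better indexing of the pages you care about.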

Using the Generate Robots.txt Files SpellMistake tool, you can create an optimized file that supports your website performance.

Importance of Testing Robots.txt File

After creating your robots.txt file, it is important to test it. Testing ensures that your rules are working correctly.

You can use tools like Google Search Console to test your file. This helps you identify errors and fix them.

Testing prevents mistakes that could harm your SEO.

The Generate Robots.txt Files SpellMistake tool helps create accurate files, but testing is still important for best results.
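
Alongside search engine tools, a quick local check can confirm that your rules do what you expect. The sketch below compares a list of expected outcomes with what Python's standard-library parser reports. The function `check_rules`, the sample rules, and the URLs are assumptions for illustration. The standard-library parser does not support wildcard patterns, so results for such rules may differ from a search engine's.

```python
from urllib.robotparser import RobotFileParser


def check_rules(robots_txt, expectations, user_agent="*"):
    """Return (url, expected, actual) for every URL whose result is unexpected."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    mismatches = []
    for url, expected in expectations.items():
        actual = parser.can_fetch(user_agent, url)
        if actual != expected:
            mismatches.append((url, expected, actual))
    return mismatches


# Hypothetical rules and the outcomes the site owner expects.
rules = "User-agent: *\nDisallow: /admin/\n"
expected_access = {
    "https://example.com/": True,
    "https://example.com/blog/": True,
    "https://example.com/admin/users": False,
}
mismatches = check_rules(rules, expected_access)
print(mismatches)
```

An empty list means every URL behaved as expected. Any entry in the list points to a rule worth reviewing before you upload the file.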

Future of Robots.txt in SEO

In the future, robots.txt will continue to play an important role in SEO. As websites become more complex, controlling crawl behavior will become more important.

Search engines are likely to rely more on structured data and proper website management.

Tools like the Generate Robots.txt Files SpellMistake tool are likely to become even more useful for website owners.

Staying updated with SEO trends will help you use robots.txt effectively.

Conclusion

The Generate Robots.txt Files SpellMistake tool is a powerful and easy way to control how search engines interact with your website. It helps you create a proper robots.txt file quickly and without errors.

A well-optimized robots.txt file improves crawling, indexing, and SEO performance. It ensures that search engines focus on your most important pages.

In 2026, managing your website properly is essential for success. Using this tool regularly will help you stay ahead and maintain a strong online presence.